Freeform Robot

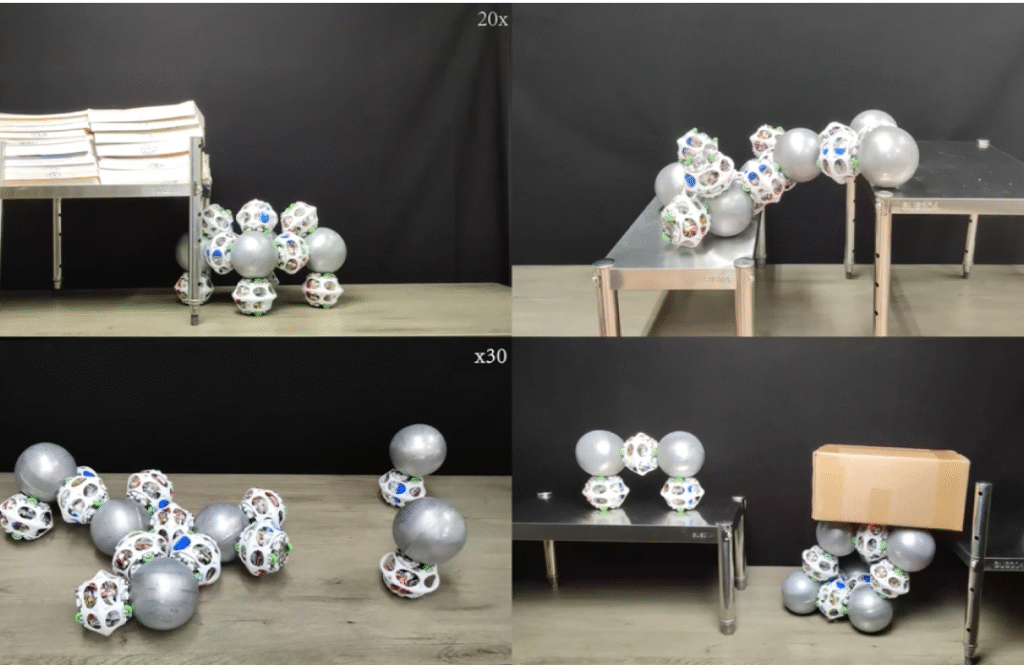

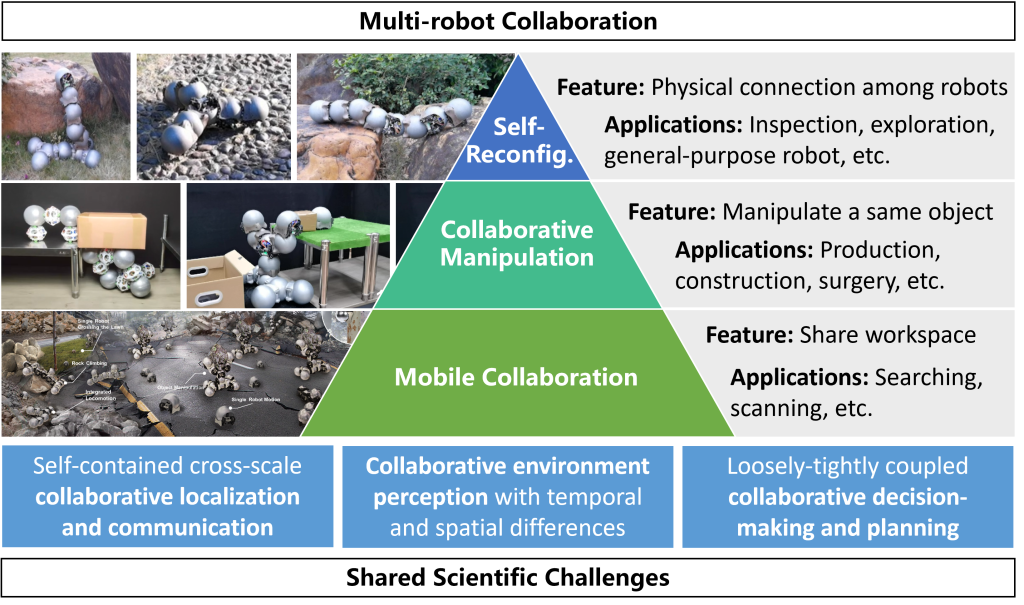

Freeform modular self-reconfigurable robotic systems offer strong self-adaptation, self-healing, and multifunctionality: robot teams can assemble, disassemble, and reconfigure into arbitrary shapes and structures to handle diverse tasks in complex, unstructured, and dynamic environments. Unlike traditional modular robots, which are constrained by fixed docking points or lattice-based geometries, this project pioneers freeform connectivity, in which modules attach omnidirectionally at any point on each other's surfaces. This removes limitations in mechanical structure, relative positioning, and motion planning that hinder real-world deployment. These developments provide a theoretical and technical foundation for general-purpose robotic swarms capable of on-demand multifunctional applications, with uses in search and rescue, space exploration, disaster response, environmental monitoring, and industrial inspection.

Media Coverage:

Metal Spheres Swarm Together to Create Freeform Modular Robots – IEEE Spectrum

Magnetic FreeBOT orbs work together to climb large obstacles – Engadget

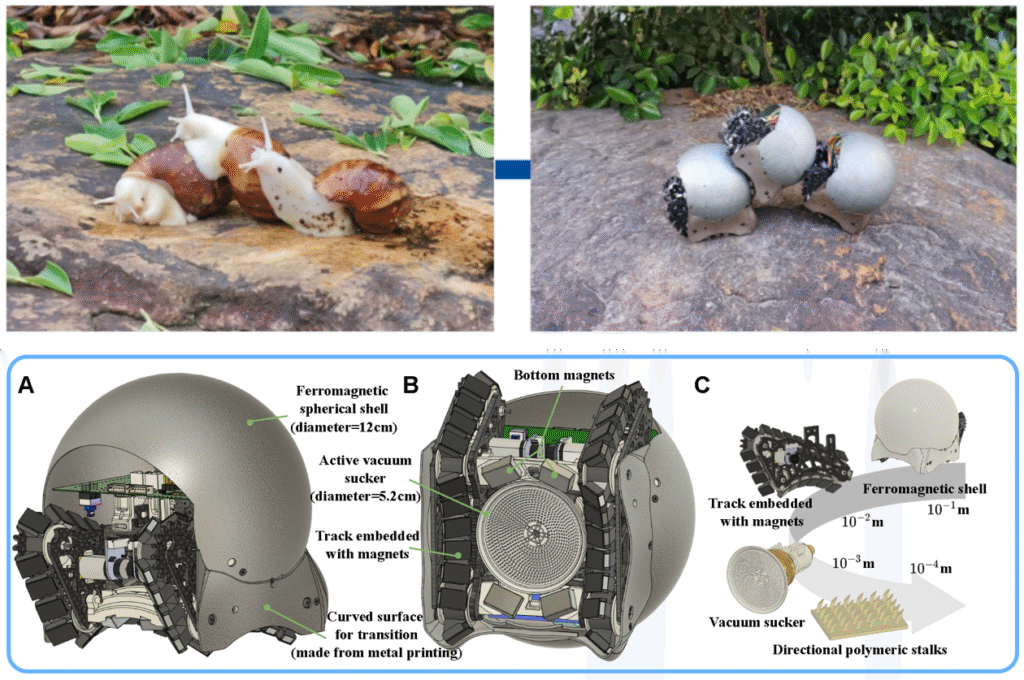

Video: Snail-inspired swarm robots cooperate to build structures on demand – Interesting Engineering

Selected Publications:

- Guanqi Liang, Auke Jan Ijspeert, Mark Yim, Tin Lun Lam, “Modular Reconfigurable Robots: Towards On-Demand Multifunctional Applications,” Science Robotics, February 2026. [paper]

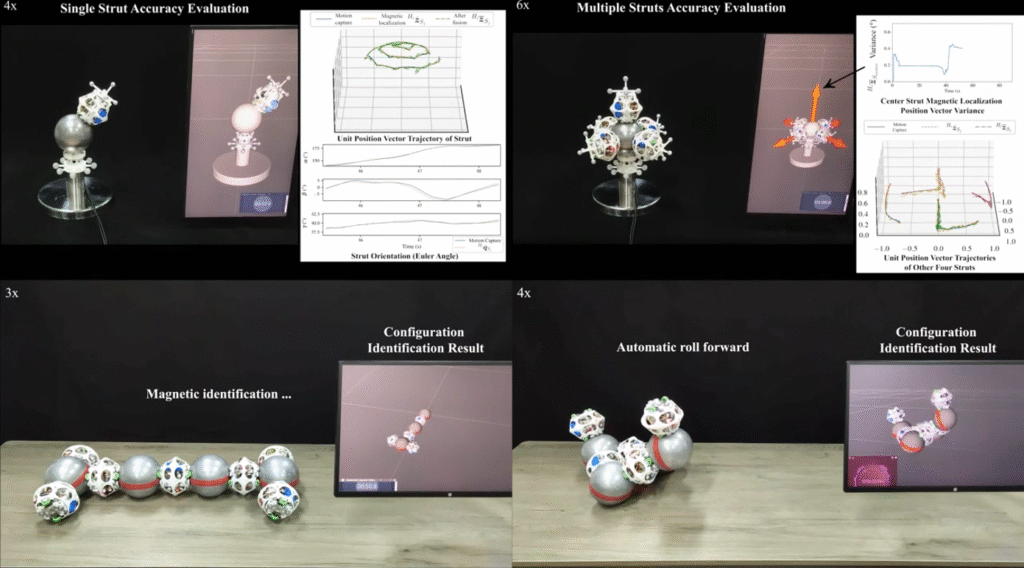

- Yuxiao Tu, Guanqi Liang, Di Wu, Xinzhuo Li, Tin Lun Lam, “Locomotion and Self-reconfiguration Autonomy for Spherical Freeform Modular Robots,” International Journal of Robotics Research (IJRR), July 2025. [paper] [video]

- Da Zhao, Haobo Luo, Yuxiao Tu, Chongxi Meng, Tin Lun Lam, “Snail-Inspired Robotic Swarms: A Hybrid Connector Drives Collective Adaptation in Unstructured Outdoor Environments,” Nature Communications, April 29, 2024. [paper] [video]

- Guanqi Liang, Haobo Luo, Ming Li, Huihuan Qian and Tin Lun Lam, “FreeBOT: A Freeform Modular Self-reconfigurable Robot with Arbitrary Connection Point – Design and Implementation,” Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Las Vegas, NV, USA (Virtual), October 25-29, 2020. [paper] [video] [IROS Best Paper Award on Robot Mechanisms and Design]

Collaborative Relative Localization

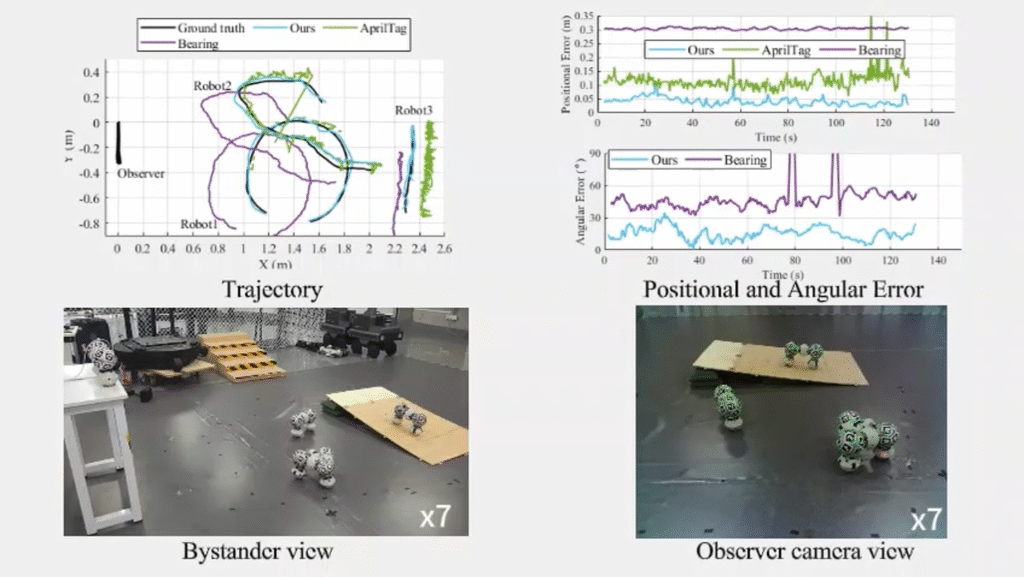

Multi-robot systems require accurate, infrastructure-free relative localization to enable robust coordination, formation control, reconfiguration, and collaborative tasks in GPS-denied or unstructured environments. This project develops self-contained (onboard-only) relative pose estimation methods that achieve high precision across extreme distance scales, from direct contact (0 mm) to over 100 meters, a span that no single sensing modality can cover. Robots operate independently without fixed anchors or external references, addressing real-world challenges such as variable configurations (especially in modular/self-reconfigurable robots), measurement noise, occlusions, and computational constraints.

The research segments localization by operational range and tailors specialized, complementary approaches:

- Contact-range (0 m, ~1 mm accuracy): Magnetic sensor arrays + graph neural network (GNN)-based detection for precise module connection identification in freeform modular robots.

- Short-range (<5 m, ~1 cm accuracy): Configuration-adaptive visual methods fusing direct vision detection, robust module recognition, and odometry optimization to handle dynamic/unstructured features in spherical or freeform modular systems.

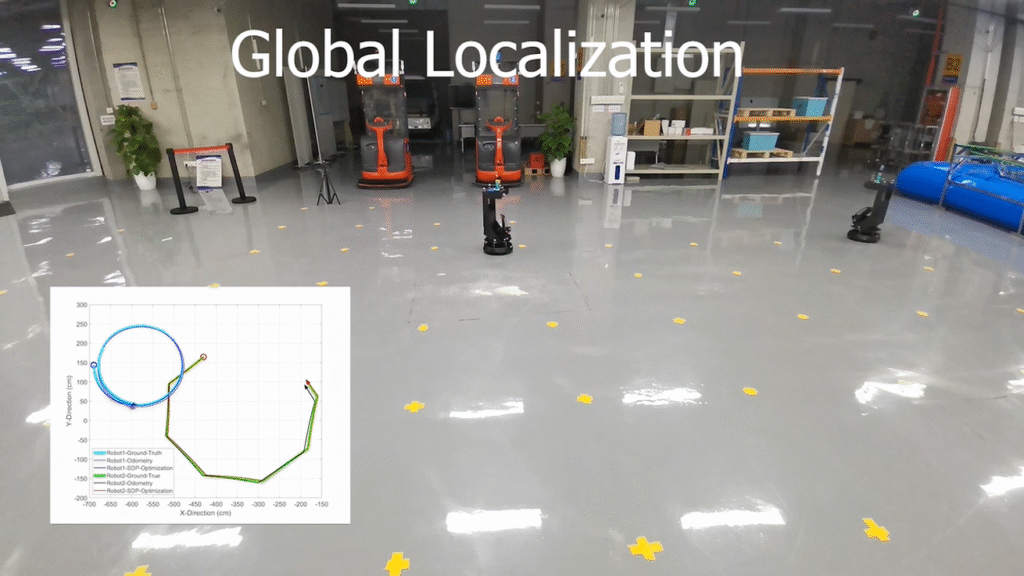

- Middle-range (<50 m, ~10 cm accuracy): Ultra-wideband (UWB) ranging + odometry fusion with asymptotically efficient estimators (e.g., weighted semidefinite relaxation) for robust, theoretically grounded 2D/3D pose recovery under noise and motion errors.

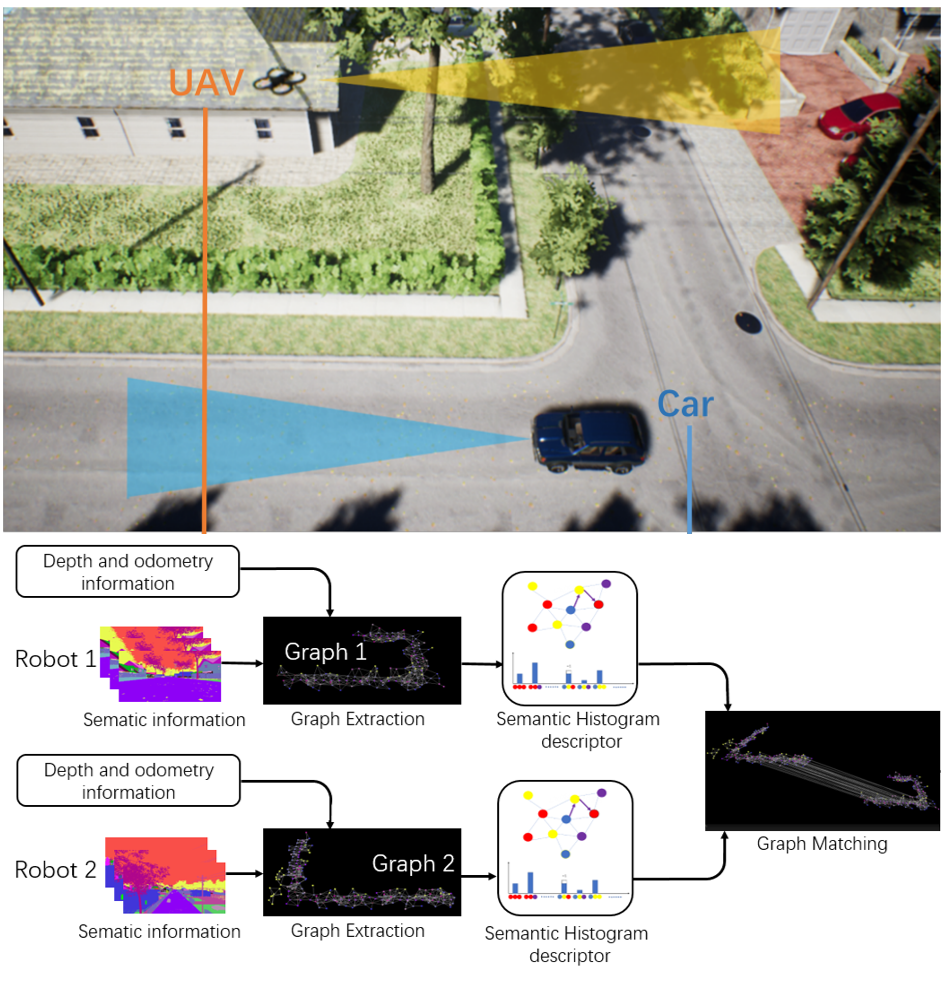

- Long-range (>50 m, ~1 m accuracy): Visual semantic landmarks and graph-based matching for scalable, viewpoint-robust global alignment in large-scale settings.

These multi-modal, self-contained techniques support applications in modular self-reconfigurable swarms, heterogeneous robot teams, search & rescue, exploration, and industrial collaboration, emphasizing low-cost hardware, real-time performance, and adaptability.
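The range segmentation above can be sketched as a simple lookup from an estimated inter-robot distance to the sensing approach listed for that band. This is only an illustration: the names `RangeBand` and `select_band` are hypothetical, the nominal accuracies are the approximate figures quoted in the list, and the handling of the exact 5 m and 50 m boundaries is an assumption, since the text does not specify it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RangeBand:
    """One operating band from the list above (hypothetical helper type)."""
    name: str
    nominal_accuracy_m: float  # approximate accuracy quoted in the text
    approach: str


CONTACT = RangeBand("contact", 0.001, "magnetic sensor arrays + GNN-based detection")
SHORT = RangeBand("short", 0.01, "configuration-adaptive vision + odometry optimization")
MIDDLE = RangeBand("middle", 0.1, "UWB ranging + odometry fusion")
LONG = RangeBand("long", 1.0, "visual semantic landmarks + graph-based matching")


def select_band(distance_m: float) -> RangeBand:
    """Pick the band for a given inter-robot distance in meters.

    Assumption: exactly 5 m is treated as middle-range and exactly 50 m
    as long-range; the source only gives "<5 m", "<50 m", and ">50 m".
    """
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    if distance_m == 0.0:
        return CONTACT
    if distance_m < 5.0:
        return SHORT
    if distance_m < 50.0:
        return MIDDLE
    return LONG


for d in (0.0, 2.5, 20.0, 120.0):
    band = select_band(d)
    print(f"{d:6.1f} m -> {band.name}-range via {band.approach}")
```

In a real system the distance itself would come from a coarse estimate (for example, the previous pose estimate), and adjacent bands would likely overlap so that estimators can hand over smoothly; neither is modeled here.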

Selected Publications:

- Yuming Liu, Qiu Zheng, Yuxiao Tu, Yuan Gao, Guanqi Liang, Tin Lun Lam, “Configuration-Adaptive Visual Relative Localization for Spherical Modular Self-Reconfigurable Robots,” Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Atlanta, USA, May 19-23, 2025. [paper] [video] [2025 IEEE ICRA Best Conference Paper Award – Finalist]

- Yuxiao Tu, Tin Lun Lam, “Configuration Identification for a Freeform Modular Self-reconfigurable Robot – FreeSN,” IEEE Transactions on Robotics (T-RO), August 2023. [paper] [video]

- Yue Wang, Muhan Lin, Xinyi Xie, Yuan Gao, Fuqin Deng, Tin Lun Lam, “Asymptotically Efficient Estimator for Range-based Robot Relative Localization,” IEEE/ASME Transactions on Mechatronics (TMECH), June 2023. [paper] [video]

Collaborative Environment Perception

In multi-robot collaborative systems, robust environment perception and seamless data fusion across heterogeneous sensors and platforms are essential for coordinated operation in complex, dynamic settings. This research tackles core challenges in multi-robot perception, focusing on accurate data association and interference mitigation to enable reliable shared understanding of the environment.

Key challenges addressed include:

- Temporal mismatches: Differences in data acquisition times cause variations in lighting, shadows, or scene dynamics, complicating feature matching and fusion.

- Viewpoint diversity: Disparate perspectives from multiple robots lead to geometric and appearance inconsistencies, hindering cross-robot data alignment.

- Mutual visual interference: Robots operating in close proximity introduce occlusions, reflections, or dynamic artifacts (e.g., from motion or lighting), degrading individual and collective perception quality.

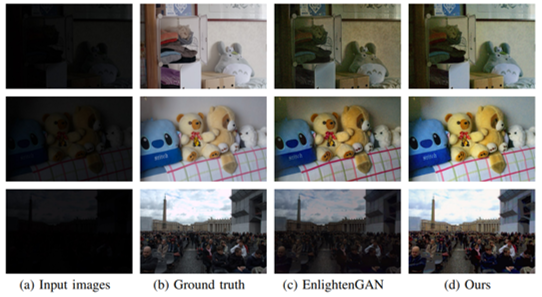

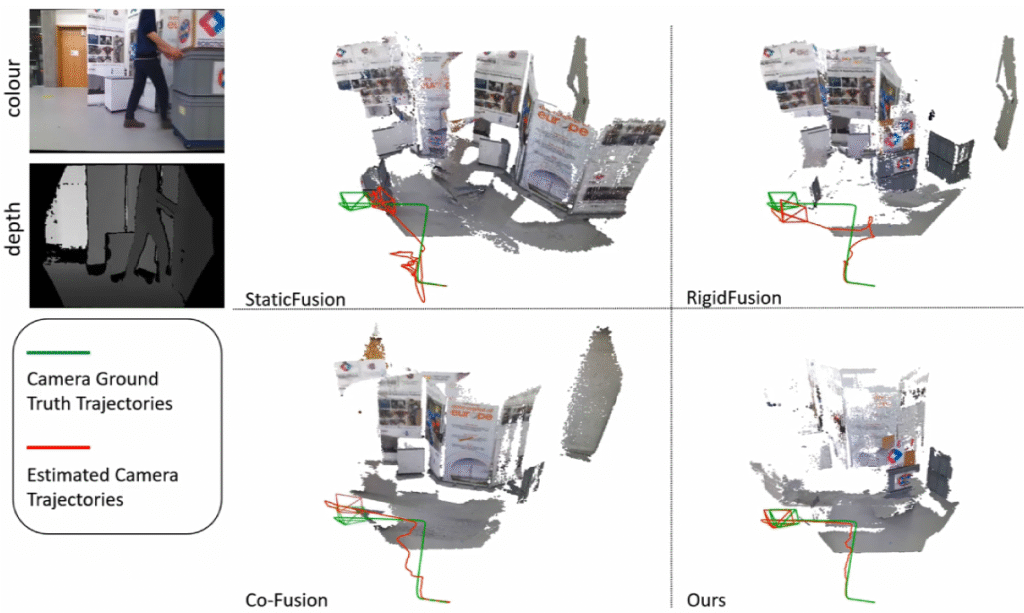

The proposed approaches leverage advanced multi-sensor fusion, semantic-aware graph matching, robust SLAM under dynamic and occluded conditions, low-light enhancement, and knowledge transfer techniques (e.g., ground-to-aerial) to overcome these issues, achieving improved accuracy, real-time performance, and resilience in large-scale or degraded environments.

Selected Publications:

- Junjie Hu, Chenyou Fan, Mete Ozay, Qing Gao, Yulan Guo, Tin Lun Lam, “Robust Depth Estimation Under Sensor Degradations: A Multi-Sensor Fusion Perspective,” IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), June 2025. [paper]

- Junjie Hu, Chenyou Fan, Mete Ozay, Hua Feng, Yuan Gao, Tin Lun Lam, “Unlocking Drone Perception in Low AGL Heights: Progressive Semi-Supervised Learning for Ground-to-Aerial Perception Knowledge Transfer,” IEEE Transactions on Intelligent Transportation Systems (TITS), May 2025. [paper]

- Xiyue Guo, Junjie Hu, Junfeng Chen, Fuqin Deng, Tin Lun Lam, “Semantic Histogram Based Graph Matching for Real-Time Multi-Robot Global Localization in Large Scale Environment,” IEEE Robotics and Automation Letters (RA-L), October 2021. [paper] [code] [video]

- Junjie Hu, Xiyue Guo, Junfeng Chen, Guanqi Liang, Fuqin Deng, Tin Lun Lam, “A Two-stage Unsupervised Approach for Low light Image Enhancement,” IEEE Robotics and Automation Letters (RA-L), October 2021. [paper] [video]

Mobile Collaboration

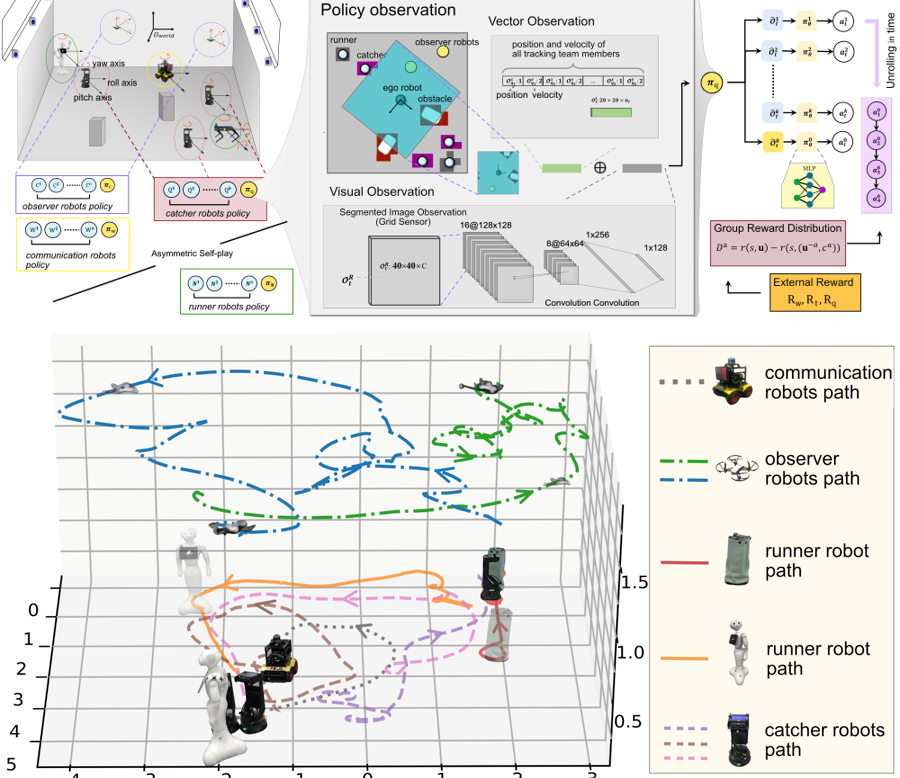

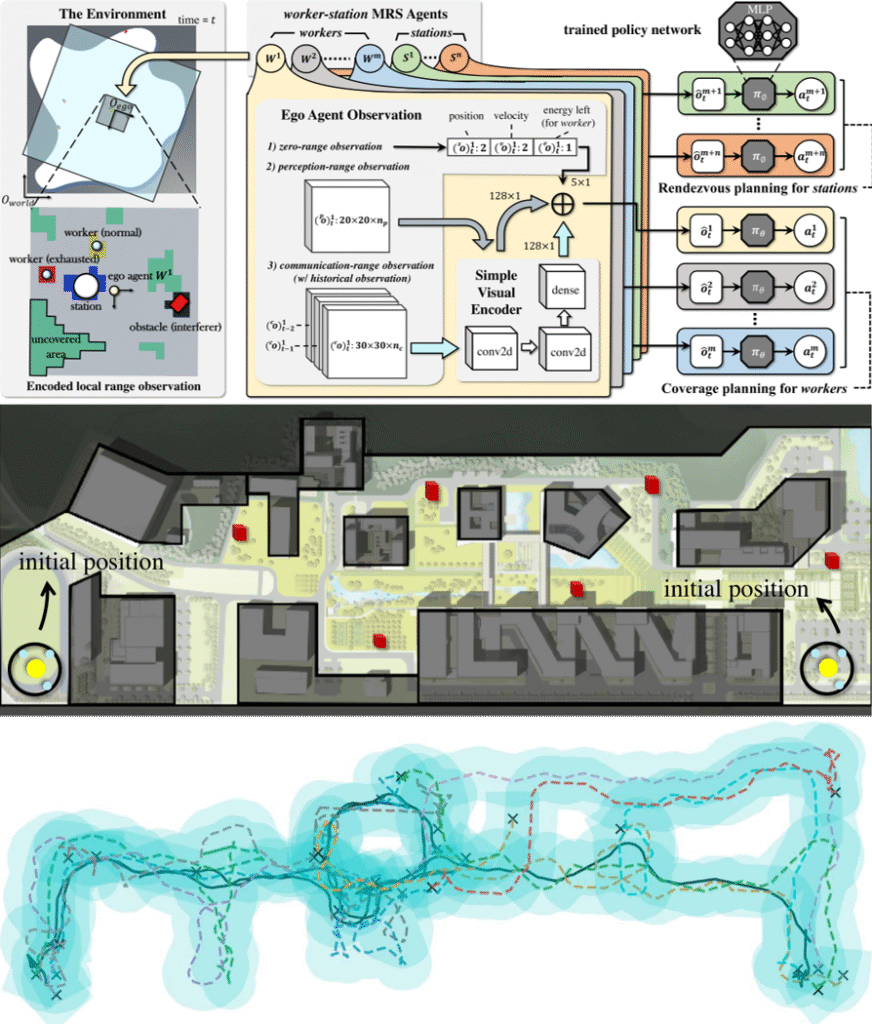

This research develops advanced planning and coordination frameworks for heterogeneous multi-robot systems, where teams of robots with diverse capabilities collaborate to accomplish complex tasks in dynamic, unstructured environments. Key challenges addressed include accurate system modeling under heterogeneity, real-time computation of optimal plans, and adaptive reconfiguration in response to environmental changes or task variations.

The approaches center on:

- Constrained multi-task assignment and scheduling models that account for robot-specific capabilities, temporal constraints, and inter-robot dependencies.

- Hybrid solution methods combining operations research techniques (for exact/near-optimal allocation) with machine learning (e.g., deep multi-agent reinforcement learning, asymmetric self-play, capability matching, meta-RL) for scalable, adaptive performance in high-dimensional or uncertain scenarios.

- Dynamic adaptation mechanisms enabling on-the-fly replanning, coordination learning, and robust execution under disturbances.

These innovations provide theoretical and algorithmic foundations for deploying heterogeneous robot teams in demanding real-world applications, including unmanned mining operations, security and anti-terrorism patrols, dynamic path coverage, human-robot co-adaptation, and collaborative catching/surveillance tasks.
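As a toy illustration of capability-constrained task assignment, the sketch below exhaustively searches one-to-one robot-task pairings and keeps the cheapest pairing in which every robot has the capabilities its task requires. It is not the formulation used in the publications: the function `assign_tasks`, the robot and task names, and the cost values are all hypothetical, and temporal constraints, inter-robot dependencies, and learning-based components are left out. Brute force is only practical for a handful of robots.

```python
from itertools import permutations
from typing import Dict, Optional, Set, Tuple


def assign_tasks(
    robot_caps: Dict[str, Set[str]],
    task_reqs: Dict[str, Set[str]],
    cost: Dict[Tuple[str, str], float],
) -> Tuple[Optional[Dict[str, str]], float]:
    """Return (task -> robot mapping, total cost) for the cheapest feasible
    one-to-one assignment, or (None, inf) if no feasible assignment exists."""
    tasks = sorted(task_reqs)
    best_plan: Optional[Dict[str, str]] = None
    best_cost = float("inf")
    # Try every ordered choice of distinct robots, one per task.
    for robots in permutations(sorted(robot_caps), len(tasks)):
        pairs = list(zip(robots, tasks))
        # A pairing is feasible only if each robot covers its task's requirements.
        if not all(task_reqs[t] <= robot_caps[r] for r, t in pairs):
            continue
        total = sum(cost[(r, t)] for r, t in pairs)
        if total < best_cost:
            best_plan, best_cost = dict(zip(tasks, robots)), total
    return best_plan, best_cost


# Hypothetical team and tasks, for illustration only.
robot_caps = {
    "ugv": {"carry", "drive"},
    "uav": {"fly", "camera"},
    "arm": {"grasp", "carry"},
}
task_reqs = {"survey": {"camera"}, "haul": {"carry"}}
cost = {("uav", "survey"): 4.0, ("ugv", "haul"): 3.0, ("arm", "haul"): 5.0}

plan, total = assign_tasks(robot_caps, task_reqs, cost)
print(plan, total)  # {'haul': 'ugv', 'survey': 'uav'} 7.0
```

For larger teams, an operations research solver or a learned policy would replace the exhaustive search, which is the motivation for the hybrid methods described above.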

Selected Publications:

- Xi Chen, Yuan Gao, Hangxin Liu, Fangkai Yang, Ali Ghadirzadeh, Jun Yang, Bin Liang, Chongjie Zhang, Tin Lun Lam, Song-Chun Zhu, “Cross-Robot Behavior Adaptation through Intention Alignment,” Science Robotics, March 2026. [paper]

- Yuan Gao, Junfeng Chen, Xi Chen, Chongyang Wang, Junjie Hu, Fuqin Deng, Tin Lun Lam, “Asymmetric Self-Play-Enabled Intelligent Heterogeneous Multirobot Catching System Using Deep Multiagent Reinforcement Learning,” IEEE Transactions on Robotics (T-RO), April 2023. [paper] [video]

- Hoi-Yin Lee, Peng Zhou, Bin Zhang, Liuming Qiu, Bowen Fan, Anqing Duan, Jingtao Tang, Tin Lun Lam, and David Navarro-Alarcon, “A Distributed Dynamic Framework to Allocate Collaborative Tasks Based on Capability Matching in Heterogeneous Multi-Robot Systems,” IEEE Transactions on Cognitive and Developmental Systems (TCDS), April 2023. [paper] [video]

- Jingtao Tang, Yuan Gao, Tin Lun Lam, “Learning to Coordinate for a Worker-Station Multi-robot System in Planar Coverage Tasks,” IEEE Robotics and Automation Letters (RA-L), October 2022. [paper] [video]

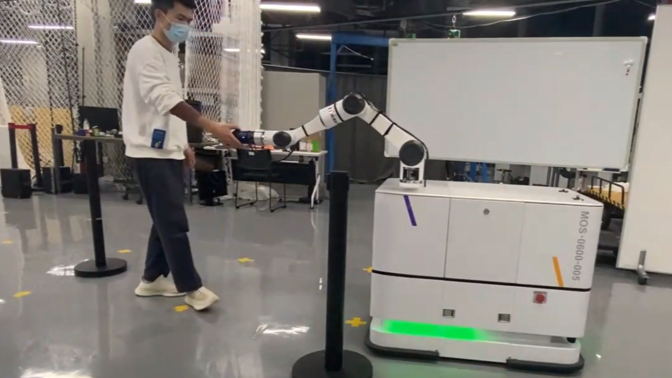

Collaborative Manipulation

This joint project between the University of Edinburgh (UoE, SLMC Group) and the Shenzhen Institute of Artificial Intelligence and Robotics for Society (AIRS) advances fundamental and applied research in AI and robotics. It centers on three interconnected scientific pillars: Multi-Contact Planning and Control, Multi-Agent Collaborative Manipulation, and Robot Perception. Together, these enable teams of mobile manipulators (legged, wheeled, or hybrid platforms) to perform coordinated loco-manipulation tasks that exceed single-robot capabilities, such as handling large or heavy payloads, transporting objects dynamically, or carrying out complex interactions in unstructured environments.

Principal Investigators: Prof. Sethu Vijayakumar (UoE), Prof. Tin Lun Lam (AIRS)

More information: AIRS project page | UoE SLMC project page

Selected Publications:

- Ran Long, Christian Rauch, Tianwei Zhang, Vladimir Ivan, Tin Lun Lam, Sethu Vijayakumar, “RGB-D SLAM in Indoor Planar Environments with Multiple Large Dynamic Objects,” IEEE Robotics and Automation Letters (RA-L), June 2022. [paper] [video]

- Zhangjie Tu, Tianwei Zhang, Lei Yan, Tin Lun Lam, “Whole-Body Control for Velocity-Controlled Mobile Collaborative Robots Using Coupling Dynamic Movement Primitives,” Proceedings of the IEEE-RAS International Conference on Humanoid Robots (Humanoids), Okinawa, Japan, November 28-30, 2022. [paper] [video]

- Xiaoyu Zhang, Lei Yan, Tin Lun Lam, Sethu Vijayakumar, “Task-Space Decomposed Motion Planning Framework for Multi-Robot Loco-Manipulation,” Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Xi'an, China, May 30 – June 5, 2021. [paper] [video]